There are two primary ways to get a Network Attached Storage (NAS). You could buy an off-the-shelf NAS appliance. Alternatively, since a NAS is really just a PC with many hard disks, you could build one from a standard PC. I chose the latter path and recently built my own DIY NAS PC.

Over the last two months or so I’ve written about the individual PC components. This post wraps up the build with the Bill of Materials (the “BOM”), which I hope will be a useful reference if you want to build your own NAS.

Bear in mind that my objective is to run a NAS, primarily to provide storage both for local network use and for remote, Dropbox-style private cloud storage. I also want to have a Linux machine around so that I can do various things with it. In the past, I ran CentOS on a physical host and used Btrfs on it, but that was inconvenient to manage.

I liked the idea of software appliances. Early on, I considered FreeNAS and NAS4Free. They are similar in many ways, most importantly in serving network storage from ZFS-backed filesystems. They also provide hypervisor capabilities for running hardware virtual machines, though in different ways. This is hyper-convergence, the combination of storage and compute virtualisation, and it seemed like exactly what I needed.

FreeNAS emerged as my choice. I get my NAS, I get my Linux virtual machine(s), and I don’t need any extra boxes.

The hyper-converged appliance needn’t be very powerful. There’s no need for premium performance or the latest generation technology. But, at the same time, it’s important to be sufficiently current so that the hardware will continue to be useful for many years to come.

So here’s the BOM:

- Motherboard: ASRock B250M Pro4

- Processor: Intel Pentium G4560

- Memory: 2x Kingston DDR4 2400 MHz 16 GB KVR24N17D8/16 modules

- Power Supply: Cooler Master V650 Semi-Modular

- Case: Fractal Design Define R5

- Boot Drive: SanDisk Ultra Fit Flash Drive (FreeNAS boot device)

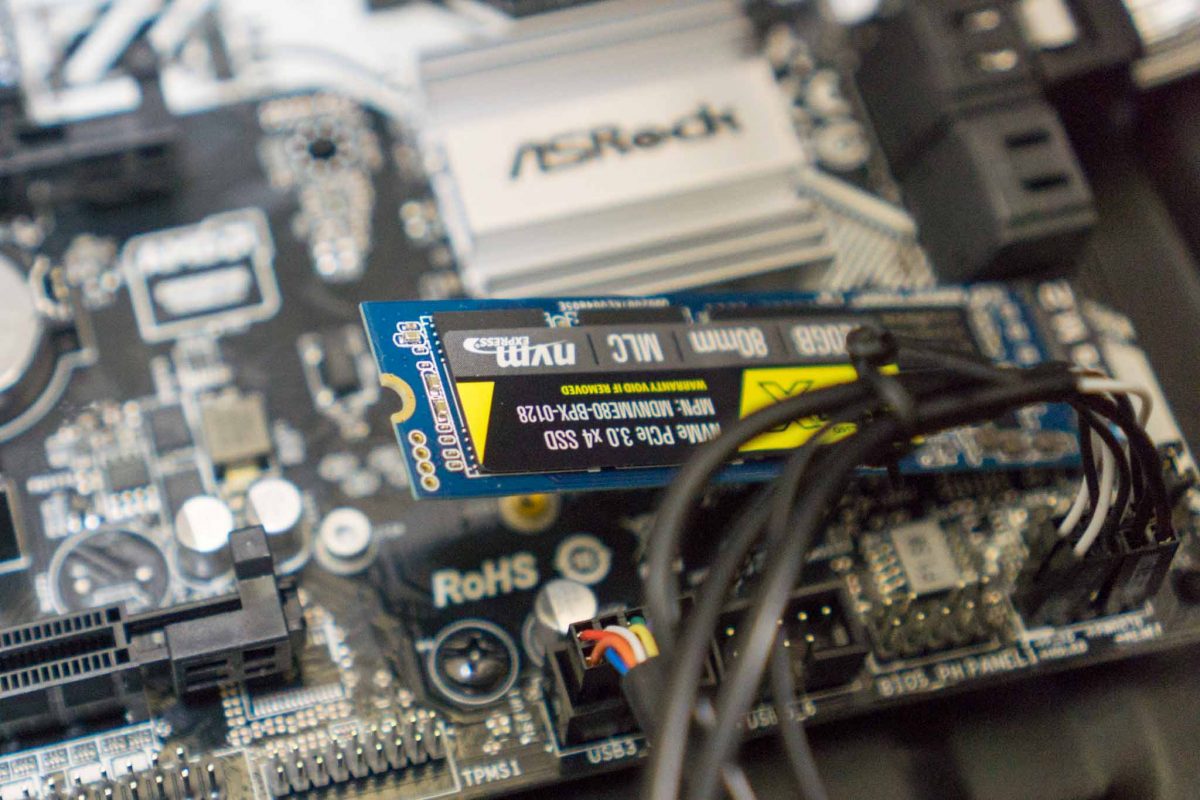

- NVMe: MyDigitalSSD BPX NVMe SSD (used for ZFS SLOG)

- Hard Disk Drive: 3x Seagate NAS IronWolf 4 TB

My reviews of the individual components are hyperlinked above.

One of the fears of being too cutting-edge with the hardware when running an appliance like FreeNAS, which is built on FreeBSD, is whether the software will actually work with it. My BOM is built around a Kaby Lake generation processor, and at its initial release there were some troubles with FreeBSD support. Fortunately, while Kaby Lake is still the latest generation, enough time has passed for FreeBSD, and in turn FreeNAS, to support it.

This BOM works out very well. In hindsight, there is one thing I would have changed. It turns out that a 650 W power supply unit is really overkill. A much smaller one would be fine, even if you’re going to pack in 10 spinning hard disk drives. I found that power consumption is quite minuscule these days.
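To put rough numbers on the power supply point, here is a back-of-envelope sketch. The 54 W figure is Intel's listed TDP for the Pentium G4560. Everything else (40 W for the motherboard, memory and SSD, 25 W per hard disk at spin-up, and 30% headroom) is an assumed placeholder rather than something I measured, so substitute your own figures.

```python
# Back-of-envelope PSU sizing. All wattages are assumptions except the
# CPU TDP, which is Intel's listed figure for the Pentium G4560.

CPU_TDP_W = 54      # Intel's listed TDP for the Pentium G4560
BASE_W = 40         # assumed: motherboard, RAM, NVMe SSD, fans
HDD_SPINUP_W = 25   # assumed: worst-case draw per 3.5" disk at spin-up


def peak_watts(hdd_count, cpu_w=CPU_TDP_W, base_w=BASE_W,
               hdd_spinup_w=HDD_SPINUP_W):
    """Estimate worst-case draw, with every disk spinning up at once."""
    return cpu_w + base_w + hdd_count * hdd_spinup_w


def recommended_psu_watts(peak, headroom=1.3):
    """Add headroom (assumed 30%) so the PSU is not run at its limit."""
    return peak * headroom


if __name__ == "__main__":
    for disks in (3, 10):
        peak = peak_watts(disks)
        psu = recommended_psu_watts(peak)
        print(f"{disks} disks: ~{peak} W peak, ~{psu:.0f} W PSU suggested")
```

Under these assumptions, even the 10-disk case stays well under 650 W.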

I currently have FreeNAS set up with one zpool, comprising a RaidZ1 vdev and a dedicated SLOG device. The box serves storage over SMB and AFP, the latter of which I plan to phase out eventually. There are automatic snapshots, and at some point I plan to replicate to a remote site, either with ZFS send/receive or a simple rsync.
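For a sense of what the two replication options look like, here is a small sketch that only builds the command lines and does not run anything. The dataset names (`tank/data`, `backup/data`), snapshot names, paths and the host `backup.example.com` are hypothetical placeholders, not my actual setup. FreeNAS also has its own replication tasks in the web UI, which this does not attempt to reproduce.

```python
import shlex


def zfs_incremental_pipeline(dataset, prev_snap, new_snap,
                             remote_host, remote_dataset):
    """Build a 'zfs send -i ... | ssh host zfs receive ...' pipeline.

    Sends only the changes between two existing snapshots of `dataset`.
    """
    send = ["zfs", "send", "-i",
            f"{dataset}@{prev_snap}", f"{dataset}@{new_snap}"]
    recv = ["ssh", remote_host, "zfs", "receive", remote_dataset]
    return f"{shlex.join(send)} | {shlex.join(recv)}"


def rsync_command(src_dir, remote_host, dest_dir):
    """Build the simpler file-level alternative using rsync.

    -a preserves permissions and times; --delete mirrors deletions.
    """
    return ["rsync", "-a", "--delete",
            f"{src_dir.rstrip('/')}/", f"{remote_host}:{dest_dir}"]


if __name__ == "__main__":
    # All names below are hypothetical examples.
    print(zfs_incremental_pipeline("tank/data", "auto-1", "auto-2",
                                   "backup.example.com", "backup/data"))
    print(shlex.join(rsync_command("/mnt/tank/data",
                                   "backup.example.com", "/srv/backup/data")))
```

The ZFS route transfers snapshot deltas at the block level and keeps the snapshot history on the remote side, but it needs ZFS on the receiving end. The rsync route works against any remote filesystem.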

RaidZ1 is ZFS’s rough equivalent of RAID 5. There is one disk’s worth of parity, so a single disk failure is fine. Best practice calls for RaidZ2, which has two disks’ worth of parity. I figured it would also be prudent to have an off-site backup, and if I had to budget for off-site resources, perhaps dual parity isn’t all that important. I’m not running a mission-critical operation, so a bit of downtime is alright.
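The trade-off is easy to see with some rough arithmetic for my three 4 TB disks. This is only an approximation: it ignores ZFS metadata and padding overhead and the difference between TB and TiB, so real usable space will be somewhat lower.

```python
def raidz_usable_tb(disks, disk_tb, parity):
    """Approximate usable capacity of a single RAID-Z vdev.

    parity is 1 for RaidZ1, 2 for RaidZ2, 3 for RaidZ3. ZFS overhead
    is ignored, so treat the result as an upper bound.
    """
    if parity not in (1, 2, 3):
        raise ValueError("parity must be 1, 2 or 3")
    if disks <= parity:
        raise ValueError("a vdev needs more disks than its parity level")
    return (disks - parity) * disk_tb


if __name__ == "__main__":
    for level in (1, 2):
        usable = raidz_usable_tb(disks=3, disk_tb=4.0, parity=level)
        print(f"RaidZ{level}: ~{usable:.0f} TB usable, "
              f"tolerates {level} failed disk(s)")
```

With only three disks, RaidZ2 would halve the usable space compared with RaidZ1, which is part of why single parity plus an off-site backup looked like the better use of my budget.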

For my Dropbox-style private cloud storage, I had previously been using ownCloud. I’ve decided to go with Nextcloud this time. There is a Nextcloud plugin for FreeNAS, but I was worried about whether the plugin would be kept up to date. I ultimately decided to run an Ubuntu virtual machine inside FreeNAS and install Nextcloud within that Ubuntu instance.

This is FreeNAS-11.0-U2, running my hyper-converged storage and compute.

I had thought of setting up a NAS, but with Dropbox being in the affordable range. There’s probably good resilience at minute cost